Photon mapping with final gathering and stratified importance sampling was implemented on CUDA this week. I also tried a crude GPU implementation of irradiance caching, using a cache position selection scheme based on a divergence field that I came up with myself. It still needs improvement, and I will try to post about it next week. For now, without irradiance caching, at 800x600 resolution with 100k photons and 200 final gather rays per pixel, rendering a frame takes ~15-20 seconds. This is far from interactive, but since I use an incremental approach in which intermediate results are displayed, the system is far more responsive when it comes to quickly visualizing results.
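To illustrate what stratified importance sampling means for the final gather, here is a minimal host-side C++ sketch (not the actual CUDA kernel). It assumes a cosine-weighted distribution over the hemisphere and a jittered grid of strata; the names `Vec3` and `stratifiedCosineSamples` are made up for this example, and the real renderer may stratify differently.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <random>
#include <vector>

struct Vec3 { float x, y, z; };

// Returns nU * nV unit directions in the local frame where +z is the surface
// normal. The unit square is divided into an nU x nV grid (stratification),
// one jittered point is drawn per cell, and each point is mapped so that
// directions are distributed with pdf = cos(theta) / pi (importance sampling).
std::vector<Vec3> stratifiedCosineSamples(int nU, int nV, unsigned seed) {
    assert(nU > 0 && nV > 0);
    const float kTwoPi = 6.28318530718f;
    std::mt19937 rng(seed);
    std::uniform_real_distribution<float> jitter(0.0f, 1.0f);

    std::vector<Vec3> dirs;
    dirs.reserve(static_cast<std::size_t>(nU) * nV);
    for (int i = 0; i < nU; ++i) {
        for (int j = 0; j < nV; ++j) {
            // Jittered position inside stratum (i, j) of the unit square.
            const float u = (i + jitter(rng)) / nU;
            const float v = (j + jitter(rng)) / nV;
            // Map to a disk of radius sqrt(u), then lift onto the hemisphere.
            const float r = std::sqrt(u);
            const float phi = kTwoPi * v;
            const float z = std::sqrt(std::max(0.0f, 1.0f - u));
            dirs.push_back({r * std::cos(phi), r * std::sin(phi), z});
        }
    }
    return dirs;
}
```

With this distribution the cosine term cancels against the pdf, so the irradiance estimate at a point is simply pi / N times the sum of the radiance returned by the N gather rays. A 10 x 20 grid gives the 200 rays per pixel quoted above.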

The following is a screenshot of the current renderer. At 1024x768 resolution, with 100k photons and 260 final gather rays per pixel, it is fully rendered in 25 seconds.

The following video showcases the current state of the renderer. Note that when the light source is moved, the response is pretty slow (~1fps). This is because we shoot 100k photons and need to precompute irradiance at each photon position to accelerate the final gather. With 10k photons, we get around 10-15fps when moving the light source, with similar rendering results.

Sunday, October 31, 2010

Sunday, October 24, 2010

CUDA kd-tree builder is ready and needs to be further optimized.

I mentioned in last week's post that I had a three-step plan for the kd-tree builder. The plan has changed somewhat: I came up with a segmentation/binning based algorithm which I thought could substitute for Kun Zhou's. With it, I successfully implemented the CUDA kd-tree builder, among other features.

In brief, the algorithm is as follows:

1. We begin with a list of triangles and a root kd-tree node containing all the triangles. Each item in the triangle list has two entries: the triangle id and the node that it belongs to. At this point we don't store the triangle list for each node in the nodes themselves.

2. We keep a list of active nodes, which are the nodes to be split in the current iteration. To start, the active node list contains only the root node.

3. While the active node list is not empty:

3a. We divide each node in the active list along the split axis into a predefined number of segments, and place a minimum bin and a maximum bin in each segment.

3b. For each triangle in parallel, determine its starting and ending segments in the node it belongs to. Increment the min bin of the segment containing its starting position, and likewise the max bin of the segment containing its ending position.

3c. Apply a segmented prefix-sum scan (provided by CUDPP) to the min/max bin lists of all the nodes. From the result we can easily get the number of triangles to the left and right of each segment boundary.

3d. For each segment in parallel, compute the SAH cost at that segment position.

3e. Apply a parallel reduction with the min operator to arrive at the minimum SAH cost for each node.

3f. For each node in parallel, conditionally split it at the segment position with the minimum SAH cost.

3g. For each triangle in parallel, do a triangle/box test to see whether it goes to the left or the right child. If it belongs to only one of the children, simply re-point it to that child. If it belongs to both, we need to create an additional entry in the triangle list to accommodate it. The newly created nodes form the new active node list.

4. Finally, we need to rearrange the triangle list so that the triangles of each node occupy consecutive entries. This can be done by first running a prefix-sum scan on the nodes' triangle counts so that we know where the triangle list of each node starts in the big list. Then, for each triangle in parallel, we put the triangle into the rearranged list. I just needed to be careful to use atomic operations to avoid race conditions.
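Steps 3a to 3e can be sketched for a single node in plain, serial C++. This is only an illustration of the binning idea, not the CUDA code: the running sums stand in for the CUDPP segmented scan, the loop over candidates stands in for the parallel min reduction, and the names (`Interval`, `SplitChoice`, `bestBinnedSplit`) as well as the two cost constants are assumptions made for the example.

```cpp
#include <algorithm>
#include <cassert>
#include <limits>
#include <vector>

struct Interval { float lo, hi; };  // a triangle's extent along the split axis
struct SplitChoice { float position; float cost; int left, right; };

// Assumed relative costs of one traversal step and one triangle test.
const float kTraversalCost = 1.0f;
const float kIntersectCost = 1.0f;

// extentA and extentB are the node's sizes along the two non-split axes.
SplitChoice bestBinnedSplit(const std::vector<Interval>& tris, float nodeLo,
                            float nodeHi, float extentA, float extentB,
                            int numSegments) {
    assert(numSegments >= 2 && nodeHi > nodeLo);
    const float width = (nodeHi - nodeLo) / numSegments;
    auto segmentOf = [&](float x) {
        const int s = static_cast<int>((x - nodeLo) / width);
        return std::min(std::max(s, 0), numSegments - 1);
    };

    // 3b: count where each triangle starts (min bin) and ends (max bin).
    std::vector<int> minBin(numSegments, 0), maxBin(numSegments, 0);
    for (const Interval& t : tris) {
        ++minBin[segmentOf(t.lo)];
        ++maxBin[segmentOf(t.hi)];
    }

    // Surface area of a box with the given length along the split axis.
    auto area = [&](float len) {
        return 2.0f * (len * extentA + len * extentB + extentA * extentB);
    };
    const float totalArea = area(nodeHi - nodeLo);
    const int n = static_cast<int>(tris.size());

    SplitChoice best{nodeLo, std::numeric_limits<float>::infinity(), 0, 0};
    int startsBefore = 0, endsBefore = 0;  // 3c: running prefix sums
    for (int k = 1; k < numSegments; ++k) {
        startsBefore += minBin[k - 1];
        endsBefore += maxBin[k - 1];
        const int left = startsBefore;     // triangles overlapping the left side
        const int right = n - endsBefore;  // triangles overlapping the right side
        const float pos = nodeLo + k * width;
        // 3d: SAH cost of splitting at this segment boundary.
        const float cost = kTraversalCost +
            kIntersectCost * (area(pos - nodeLo) * left +
                              area(nodeHi - pos) * right) / totalArea;
        if (cost < best.cost) best = {pos, cost, left, right};  // 3e: min
    }
    return best;
}
```

Note that `left + right` can exceed the triangle count: a triangle straddling the chosen plane is counted on both sides, which corresponds to the extra triangle list entry created in step 3g.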

The renderer now also supports texture mapping with bilinear filtering, as well as bump mapping. For the Sponza scene with 77K triangles and a precomputed kd-tree, we get ~20fps at 800x600. If we rebuild the kd-tree every frame, we still get 4-5fps, which is not bad considering that there is still room for optimization. The two videos below show the rendering results with and without per-frame kd-tree rebuilding.

For the coming week, I will apply the kd-tree building algorithm to photon mapping (with VVH as opposed to SAH for split cost), and get the final gathering working (hopefully it can be interactive). I've already started on this, and will keep everyone posted.

Sunday, October 17, 2010

kd-tree CPU builder and GPU-based tracer is ready

In the past week, I added a significant amount of code to my renderer, most of which pertains to kd-tree construction and traversal. I read through Kun Zhou's paper on GPU-based kd-tree construction and decided that it could be implemented in the following steps:

1) Make sure the kd-tree tracer (traversal) is working. To achieve this, I first implement a CPU-based kd-tree builder with a data structure optimized for CUDA, then a GPU-based tracer that uses the resulting kd-tree. At this point, I should have a CPU kd-tree builder and a GPU tracer.

2) Substitute a GPU kd-tree builder for the CPU one built in the first step. The output data structure should stay the same so that (hopefully) the GPU tracer from step 1 does not need to change. The GPU kd-tree builder should implement the Small Node stage according to Zhou's paper.

3) Implement the Large Node stage as proposed in Zhou's paper. This is trickier than the Small Node stage, but it should hopefully provide a worthwhile performance boost.

So for this week, Step 1 is done. I set up a Team Foundation Server project so that I can keep track of different versions of the code, which is very useful. For the actual kd-tree implementation, to make the data readily available to the GPU (and later to the GPU kd-tree builder), the kd-tree nodes, triangle lists and split position lists live in pre-allocated chunks of memory. For example, the KdTreeNode class looks like the following:

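    struct KdTreeNode
    {
        union
        {
            struct
            {
                float split;          // split plane position along `axis`
                int axis, left, tri;  // split axis, left child index, head of triangle list
            };
            struct
            {
                float4 __data;        // the same 16 bytes viewed as one float4
            };
        };
    };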

The node is packed into one float4 value. left is the index of the left child in the pre-allocated array of KdTreeNodes, and the right child is simply left + 1. The integer tri is the head index of the node's triangle list in the pre-allocated triangle list array. The triangle list and the split position list are two big linked lists. Whenever a resource is requested, the next available element is fetched from the list, and it is returned to the "pool" once it is no longer used. This way, we only need to allocate the memory once.
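The pooled linked list idea can be sketched roughly as follows. This is a simplified, single-threaded C++ illustration under my own assumptions: the names `TriEntry` and `TriListPool` are hypothetical, and -1 is assumed to mark the end of a list.

```cpp
#include <cassert>
#include <vector>

// One element of the pre-allocated triangle list: a triangle id plus the
// index of the next element in the same node's list (-1 ends the list).
struct TriEntry { int triangle; int next; };

class TriListPool {
public:
    // Allocates all entries once and chains them into a free list.
    explicit TriListPool(int capacity)
        : entries_(capacity), freeHead_(capacity > 0 ? 0 : -1) {
        for (int i = 0; i < capacity; ++i)
            entries_[i] = {-1, i + 1 < capacity ? i + 1 : -1};
    }

    // Takes the next free element, stores `triangle` in it and links it in
    // front of the list starting at `head`. Returns the new head index,
    // which is what a node would keep in its `tri` field.
    int push(int head, int triangle) {
        assert(freeHead_ != -1 && "pool exhausted");
        const int slot = freeHead_;
        freeHead_ = entries_[slot].next;
        entries_[slot] = {triangle, head};
        return slot;
    }

    // Returns every element of the list to the pool; the list is now empty.
    int releaseList(int head) {
        while (head != -1) {
            const int next = entries_[head].next;
            entries_[head] = {-1, freeHead_};
            freeHead_ = head;
            head = next;
        }
        return -1;
    }

    // Number of elements reachable from `head`.
    int length(int head) const {
        int n = 0;
        for (; head != -1; head = entries_[head].next) ++n;
        return n;
    }

    int freeCount() const { return length(freeHead_); }
    const TriEntry& at(int index) const { return entries_[index]; }

private:
    std::vector<TriEntry> entries_;
    int freeHead_;
};
```

Because everything is addressed by index rather than by pointer, the whole array can be copied to the GPU as-is, which is the point of pre-allocating the memory in one chunk.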

The GPU tracing part is a stack-based kd-tree traversal implemented according to Zhou's paper. However, the triangle list isn't yet arranged in pre-order traversal order. This can be done in Steps 2 and 3.
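For readers unfamiliar with stack-based traversal, here is a rough host-side C++ sketch of the general technique (not the kernel itself). It reuses the node layout above with right child = left + 1, and assumes, purely for illustration, that axis == -1 marks a leaf. Instead of intersecting triangles, it just records the leaves a ray passes through in front-to-back order.

```cpp
#include <cassert>
#include <limits>
#include <vector>

struct Node { float split; int axis, left, tri; };  // axis == -1: leaf (assumed)
struct Ray { float origin[3]; float dir[3]; };

// Returns the indices of the leaves visited by the ray within [tMin, tMax],
// ordered front to back. nodes[0] is the root.
std::vector<int> visitLeaves(const std::vector<Node>& nodes, const Ray& ray,
                             float tMin, float tMax) {
    assert(!nodes.empty() && tMin <= tMax);
    struct StackItem { int node; float tMin, tMax; };
    std::vector<StackItem> stack;  // on the GPU: a small fixed-size array
    std::vector<int> leaves;
    stack.push_back({0, tMin, tMax});

    while (!stack.empty()) {
        const StackItem item = stack.back();
        stack.pop_back();
        int n = item.node;
        float t0 = item.tMin, t1 = item.tMax;

        while (nodes[n].axis != -1) {
            const Node& node = nodes[n];
            const float o = ray.origin[node.axis];
            const float d = ray.dir[node.axis];
            // The near child is on the side of the plane where the ray starts.
            const bool leftFirst =
                o < node.split || (o == node.split && d <= 0.0f);
            const int nearChild = leftFirst ? node.left : node.left + 1;
            const int farChild = leftFirst ? node.left + 1 : node.left;
            // Ray parameter at the split plane (infinite if parallel to it).
            const float tPlane = d != 0.0f
                ? (node.split - o) / d
                : std::numeric_limits<float>::infinity();

            if (tPlane > t1 || tPlane <= 0.0f) {
                n = nearChild;             // plane not reached inside the node
            } else if (tPlane < t0) {
                n = farChild;              // plane crossed before entering
            } else {
                stack.push_back({farChild, tPlane, t1});  // visit far side later
                n = nearChild;
                t1 = tPlane;
            }
        }
        leaves.push_back(n);  // a real tracer tests the leaf's triangles here
    }
    return leaves;
}
```

A real tracer would stop as soon as it finds a hit inside the current leaf's [t0, t1] range instead of visiting the remaining leaves on the stack.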

For the next week, I plan to complete Step 2 at a minimum, and optionally Step 3 if time permits. Moreover, I will implement more intuitive movement control using the keyboard and add texture mapping support to the OBJ loader.

The following is a video showcasing the current state of the tracer. I used goldfish.obj with 23k triangles. After kd-tree construction on the CPU with a maximum of 13 levels and 9516 kd-tree nodes, the ray tracer still achieved a stable 60fps (the maximum FPS of the monitor) with 2 levels of reflection. A pretty satisfactory result for now.

Friday, October 8, 2010

Current status on my existing photon tracer and new CUDA RTRT

This past week was spent in learning and setting up CUDA, stabilizing and rendering using my existing photon tracer and writing a simple CUDA ray tracer.

The existing photon tracer (ktracer), which I wrote last semester for CIS-660 (Advanced Topics in Computer Graphics), can photon trace any triangular mesh scene in 3DS format and can merge multiple 3DS files into a single scene. It uses a hierarchical uniform grid as its acceleration structure, supports multi-threaded rendering and uses SIMD intrinsics for low-level computation. For the photon mapping part, irradiance caching is done on the photons so that final gathering can be greatly accelerated. Here are some results from the current ktracer:

This is a photon traced image of the well-known Cornell Box which I made with 3DS MAX. With 50 area light samples and 50 final gather samples, the final image with 640x640 resolution is rendered in 16.562s.

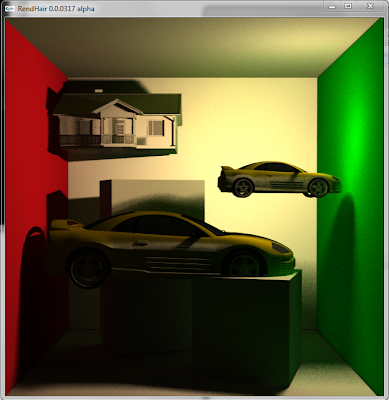

To showcase the ability to take advantage of the uniform grid, two car models and a house model are added to the scene. Now with 65K triangles and the same rendering settings, the above image is rendered in 27.43s.

The existing ktracer provides a performance baseline. It is still far from interactive. To be interactive, the bottom scene needs to render at ~1fps. In other words, without final gathering and using only one sample per pixel, we should get at least 50fps. This will be the performance target for my senior project.

For the rest of the week, I read a number of CUDA/raytracing tutorials. Here's a list of them that I found particularly helpful:

CUDA, Supercomputing for the Masses (http://www.drdobbs.com/high-performance-computing/207200659;jsessionid=XNULCSWKTFGIZQE1GHPCKH4ATMY32JVN)

Triers CUDA Raytracing Tutorial (http://cg.alexandra.dk/2009/08/10/triers-cuda-ray-tracing-tutorial/) They provided some great skeletal code for starting to write a ray tracer. Part of my project is based on the CUDA/OpenGL code of the tutorial.

NVIDIA CUDA C Programming Guide. This is the official guide with up-to-date information.

I set up my newly built test bench with an i7-950 processor, 4GB of memory and a GeForce GTX 470 graphics card. Then I downloaded and installed the CUDA Toolkit and GPU Computing SDK 3.2 RC. I also found that the latest 1.5 RC version of Parallel Nsight, which supports Visual Studio 2010, is open to Beta program participants, so I applied to the program and was soon admitted. In my opinion, the folks at NVIDIA have done a really good job integrating with VS2010. Also, with a host and a target machine, we can remotely debug CUDA programs with simple operations such as setting breakpoints, which is a very neat feature.

After quite some time of coding, I finished the initial ray tracer, which is able to load OBJ files with MTL support. It currently doesn't have any acceleration structure, but it renders the Cornell Box scene very smoothly at over 60 fps. I started off porting the 3DS loader from my previous renderer to the new one, but ended up getting all kinds of compiler errors. Eventually, I gave up on that idea and instead wrote an OBJ loader and used 3DS MAX to export the file to OBJ format. A screenshot and a video below showcase the current renderer:

Here is a video showing some real-time camera movement:

Sunday, October 3, 2010

Interactive Global Illumination of Indoor Scenes with Photon Mapping - A Brief Overview

Efficiently and realistically rendering the nuances of direct and indirect lighting in indoor scenes has always been a challenging task in computer graphics. Scenes with movable light sources and camera positions deserve particular attention, as rendering them efficiently would enable animators and designers to quickly inspect architecture from different perspectives and under different lighting settings.

Based on these requirements, I propose a fast, robust and realistic renderer with global illumination capabilities specifically for indoor scenes, built by coupling kd-tree and coherent grid acceleration structures. The renderer uses regular ray tracing for direct illumination and reflection, and photon map final gathering for indirect illumination. The scene comprises mostly static background objects and a limited number of moving objects. Whenever the light sources or the camera position change, spatio-temporal coherence is exploited so that only a fraction of the work done for the previous frame is needed to render the new frame.

To best utilize kd-tree's fast look-up property and coherent uniform grid's fast reconstruction property, we use the former for static background scene and the latter for moving objects. To improve rendering responsiveness, interim rendering results are displayed during the final gathering process; moreover, photon mapping pre-computation could be used. This should dramatically reduce the required final gathering samples. Lastly, CUDA will be utilized to better parallelize the rendering process. This project is an extension to and further research on my previous work on efficient ray-tracing. Built on the framework of my existing photon tracer, this high quality renderer should be able to deliver impressive scene fly-by experiences and speed up the workflow of creating and rendering indoor architectures.
